In the course of inventing new tools, sometimes you find that the old tools weren’t so bad. Sometimes they might even be better than your “improved” versions. And that might be the case with our key inflation measure.

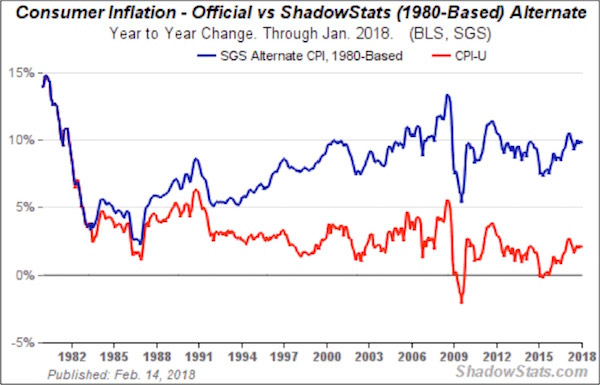

My friend John Williams at ShadowStats believes that the changes that the Labor Dept. made in the ’80s and ’90s seriously distorted the Consumer Price Index. Reverting to the pre-1980 method, he estimates that CPI inflation is running close to 10% today.

Source: ShadowStats

As I’ve noted in my recent Thoughts from the Frontline newsletters, I think it’s likely that today’s official CPI understates inflation in some categories. But does it underestimate inflation as much as John Williams thinks it does?

CPI Index Limitations

The government changed its CPI methodology because the economy had evolved, and experts believed the old methods had become misleading.

Look at John’s chart above. You can see that most of the divergence happened in the 1990s. Official CPI-U growth ran in the 3–5% range, while John’s 1980 version rose from 5% to almost 10% by the year 2000. The growth rates have been nearly equal since then.

What was happening in the 1990s that might explain this? I can think of two possible key factors:

• The Internet and other rapid technological changes

• Globalization and trade agreements like NAFTA

Both of those drove prices downward, and the 1980 CPI method wasn’t designed to capture the shift. I’m just speculating, but maybe applying the old rules in a new environment resulted in the overweighting of sectors with higher price inflation.
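To make the weighting point concrete, here is a minimal sketch of how a basket with stale weights can report a higher rate than one with updated weights, even when the underlying price changes are identical. Every category, weight, and price change below is a made-up number for illustration. None of it is actual CPI data, and this is not the Labor Dept.'s or ShadowStats' actual method.

```python
# Hypothetical numbers for illustration only; not actual CPI data or weights.

def weighted_inflation(weights, price_changes):
    """Return the weighted-average price change (in percent) for a basket.

    weights: dict of category -> expenditure share (shares must sum to 1)
    price_changes: dict of category -> annual price change in percent
    """
    total = sum(weights.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[c] * price_changes[c] for c in weights)


# Same price changes for both baskets (assumed values).
price_changes = {
    "health_care": 8.0,
    "education": 7.0,
    "electronics": -5.0,
    "apparel": 0.0,
}

# "Old" basket: heavier on the high-inflation categories (assumed shares).
old_weights = {
    "health_care": 0.35,
    "education": 0.35,
    "electronics": 0.10,
    "apparel": 0.20,
}

# "New" basket: more spending on goods whose prices are falling (assumed shares).
new_weights = {
    "health_care": 0.25,
    "education": 0.25,
    "electronics": 0.30,
    "apparel": 0.20,
}

old_rate = weighted_inflation(old_weights, price_changes)
new_rate = weighted_inflation(new_weights, price_changes)

print(f"Old-weight basket: {old_rate:.2f}%")
print(f"New-weight basket: {new_rate:.2f}%")
```

With these invented inputs, the old-weight basket reports 4.75% and the new-weight basket 2.25%. Nothing about prices differs between the two runs; only the weights do, which is the kind of gap the speculation above has in mind.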

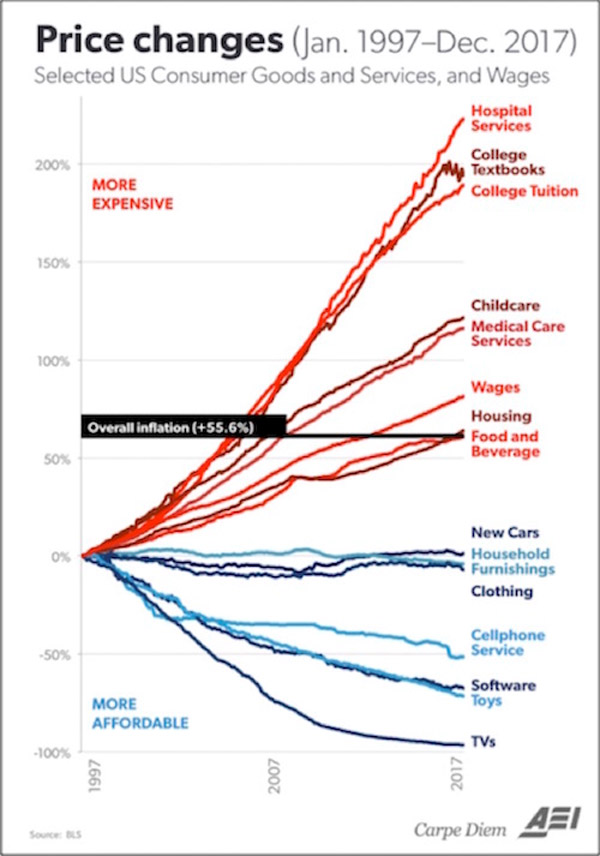

Notice in the chart above that it is the high-inflation items that are most influenced by government: things like health care and government-subsidized education. (If you think education is not influenced by the government, you are not paying attention.) The items that are not growing in price? Those are more purely market-driven.

There’s No One-Size-Fits-All Inflation Measure

No matter how you measure inflation, you are measuring average inflation across a huge population: 330 million people in the US or 741 million people in Europe. Inflation in Texas is different from inflation in New York. But I can guarantee you that inflation in Greece is even more different from inflation in Sweden or Switzerland.

The ECB is trying to maintain a single monetary policy for the 340 million people in the eurozone, which makes its situation roughly analogous to ours, at least in terms of population.

But the differences across those countries are significantly greater than the differences among the US states. And how about the Bank of Canada? Do you think Nova Scotia, Québec, Ontario, and British Columbia have the same inflation rate? Especially if you factor in housing?

And yet the Fed and other central banks treat the data as if that were so.

Some of us are old enough to remember the rolling regional recessions of the ’80s. Nearly every region experienced a significant recession at some point, while the rest of the country was doing just fine.

And rather than creating a special monetary policy for a region, the Fed had to manage the entire country with one policy.

Whether we are talking about inflation, unemployment, or any other statistic, the elephant in the room is that the final number that makes the headlines represents highly massaged data.

That might be all right if we all treated the number as the approximate construct that it is. We don’t. People in power, like Federal Reserve officials, put far more confidence in the numbers than they should.

And the numbers that support their decisions go right into the models, which then affect the rest of us and have real, immediate impacts on the economy.

Blind Monetary Policy

You have certainly heard the story about blind men touching different parts of an elephant and describing what they think an elephant is—a rope, a fan, a tree trunk, etc.

That’s roughly how monetary policy works in both the US and other countries. A group of humans wander in the economic murk, each sensing conditions and arriving at his or her own conclusions.

We end up with policy designed by a committee of confusion. There are better ways. I would not count on our central bankers finding them, because they have been trained to believe that their data gathering and their modeling are valid, necessary, and useful.

The Fed is steeped in the theory that allowing the markets to actually set interest rates would be devastating. Instead, the process needs the all-seeing guidance of 12 people sitting around a table. How could the world possibly end up with the correct price of money (short-term interest rates and the level of the money supply) without their economic wisdom?

It is much the same as when doctors in the 1800s resisted washing their hands, even when confronted with research demonstrating that handwashing resulted in fewer infections. They believed in their superior knowledge and training.

What will it take for our own economic intelligentsia to realize that they need to wash their hands? Leaving the market to set rates by itself wouldn’t guarantee smooth sailing. Nothing can.

But it would provide better signals to the marketplace and to businesses. Purposely manipulating rates lower for long periods of time sends signals to businessmen and investors to act differently than they otherwise would.

And that distortion creates its own set of imbalances. Which approach is worse? We may never know.
